The NBA, over the past decade, has undergone a revolution in basketball offense. With the increase in long-range shooting ability, NBA teams have built their offenses around deep shooting and the spacing that it opens up. As a result, observers claim that even the worst teams today are measurably more efficient and threatening on offense than some of the best teams from twenty years ago.

The main benchmark of a good team, then, is how complete it is on both offense and defense. As it turns out, it is much harder to build a consistently elite defense than an elite offense – and this has become the main divide between a team that contends for championships and a team that can be fun in spurts but still ends up languishing as a bottom-feeder in the crevices of the league.

What I’ve described is what cybersecurity might look like going forward.

Actualizing myths

Every few months, an AI company announces a model so powerful, unprecedented, and risky that it must be handled with special care. This has become its own meme, where a company says the model is astonishingly capable, darkly hints at misuse risks, then wraps the whole thing in the language of saintly responsibility.

Are you feeling it yet, the awe and gratitude that you are simultaneously expected to exude?

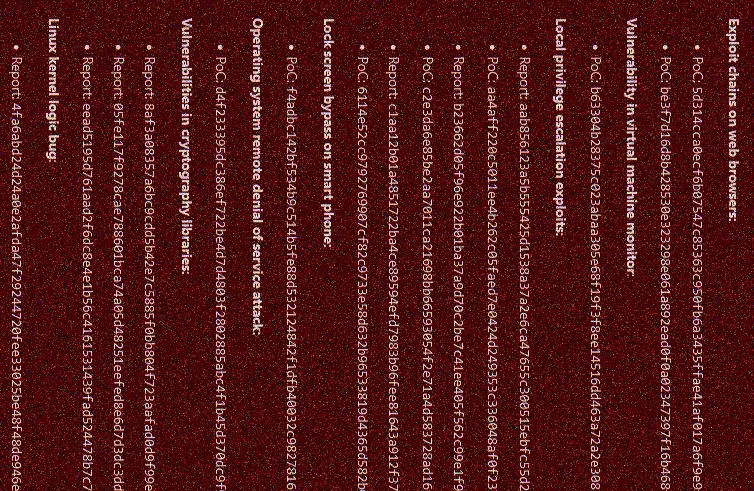

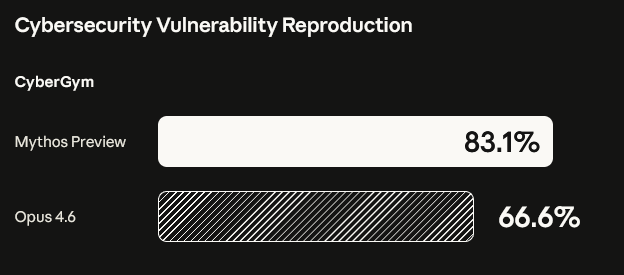

So, a reflexive eye roll at new announcements is understandable. But Anthropic’s new Claude Mythos may seem more worth paying attention to than the usual round of theater, because the hype is less about its ability to chat, write, or even code in the usual sense. While it may perform well across a range of benchmarks, the capability provoking the most alarm is its aptitude for vulnerability discovery and hacking. Unlike a lot of AI promises, this is an area where frontier models may be getting more concretely and practically dangerous.

The basic idea behind Project Glasswing is therefore straightforward. Anthropic says its model is unusually good at finding serious software vulnerabilities and helping develop exploits (yes, this sounds like hype). But instead of shipping it widely, they are giving access to major companies and key infrastructure operators first, so they can patch their systems before this sort of capability spreads further (okay, maybe there is more meat here).

That is probably the rational move – better that the big tech firms, cloud providers, and banks start using this stuff defensively before the rest of the world catches up. But even if they are acting sensibly here, there is a broader implication that is less reassuring: if advanced offensive capability diffuses faster than practical defensive support for the long tail, AI could make access to good cybersecurity more unequal.

Cybersecurity for me (but not for thee), o ye of little capital

For years, AI firms have loved to preach “democratization.” AI will democratize creativity, education, software, and so on. Much of that has been overstated, but there is one area where AI may truly be democratizing capabilities: cyber offense.

You do not need every random user to become a top-tier exploit developer for this to matter. You just need the expertise threshold to drop, so that it becomes easier for mediocre operators to do things that used to require more patience or more specialized knowledge – and more fundamentally, you only need a flicker of motivation to begin pursuing something like that.

Maybe you get curious one day about how you can steal your parent’s online bank info to buy those luscious microtransactions. Or, you grow suspicious of your spouse and you try to break into their emails (just out of curiosity, honey!). Or maybe you are so frustrated with being called a slacktivist that you endeavor to make some real change, but still from the comfort of your bed. With that push and a few LLM-assisted searches, you find yourself on the cusp of learning how to do something illegal.

The defensive side of the equation is not symmetrical. Cyber risk has never depended solely on the existence of exceptional attackers, and is often driven by the scale and frequency of competent-enough attacks against widely exposed, weakly maintained systems. If AI increases that volume while compressing the time between vulnerability discovery and exploitation, then defenders with thin margins suffer first and most.

Consider that:

- Large firms can absorb new paradigms. They have red teams, bug bounties, secure development cycles, relationships with vendors, patch pipelines, lawyers and incident-response playbooks. Give them a model that can surface difficult vulnerabilities at scale, and they can do something with it.

- Smaller shops cannot. A founder with four engineers and a thin runway does not become resilient just because a frontier lab says the industry is entering a new era of machine-speed vulnerability discovery. That dev team becomes more exposed – and so do the local government office, the nonprofit, the overstretched startup, and the maintainer of some open-source dependency that half the internet relies on.

So what starts to emerge is a split. The big players get machine-assisted defense, while everyone else gets machine-assisted offenses drawn up against them.

This asymmetry is compounded by how interconnected software ecosystems are. Small actors are not isolated from large ones; they are often embedded inside the large players’ supply chains and dependency trees. The underfunded maintainer of an open-source library, the contractor with lax security, the small-town IT office still running Windows XP, the small healthcare network without a mature backup and recovery process: any of these can become the weak point through which wider harm propagates.

A world in which elite firms are better able to defend themselves does not necessarily become safer overall if the long tail of dependencies becomes easier to exploit. In fact, the concentration of defensive capacity at the top can mask the fragilities underneath.

A fair counterpoint is that AI can also help smaller actors identify threats. That is true, but identification is only the first step. Smaller actors may still lack the staff, time, money, or authority to fix what they find at the necessary speed – and we still end up in a world where AI disproportionately rewards those who can operationalize it.

Trust the [institutional] process?

I noted earlier that elite defenses are harder to create in basketball. Yes, tactical and analytical changes that are easier to mimic (“just shoot more threes”) have raised the offensive baseline across the NBA. Yet championship contention still depends on a narrower and harder-to-build set of defensive capabilities: coordination, discipline, depth, switching ability, error recovery, and the ability to withstand sustained pressure from multifaceted offenses.

Cybersec is unfortunately not quite basketball, but the logic is close.

Offensive improvements can diffuse broadly and quickly because they are modular, visible, and often easier to imitate. Defensive excellence is harder because it is organizational – it depends on process, incentives, maintenance, and resilience under stress. Those qualities are slower to build and are much more unevenly distributed.

There are options, of course, ranging from subsidized security support for smaller developers, to direct funding for critical open-source maintenance, to shared scanning and remediation infrastructure. Public options such as CISA’s Cyber Hygiene already exist, but they could be applied more broadly and augmented with remediation support that actually fixes things for parties who would otherwise be outmatched.

At the end of the (zero) day, this is both a market structure issue and a diffusion problem. If AI reduces the cost of finding and exploiting weaknesses faster than it reduces the cost of repairing them, then cyber insecurity will not be distributed evenly. It will accumulate where institutional capacity is already weak.

This is not a ‘gotcha’ piece that illuminates something Anthropic and other frontier research institutions have not considered. It is meant to illustrate that even with the best intentions from them and other corporate actors, advanced cybersecurity may still end up becoming a concentrated luxury that is difficult to diffuse.